Air-Gapped Hardware Wallets and FUD - 2

Is an air-gapped Bitcoin hardware wallet more secure than a non-air-gapped one? Or is it just inconvenient security theater? A discussion of the claims of the article “Does airgap make Bitcoin Hardware wallets more secure?” (Bakkum, Shift Crypto AG, 2021-10-27). Shift Crypto is the provider of a fine non-air-gapped Bitcoin hardware wallet, the BitBox02.

précis:

Did the successful and notorious Stuxnet attack succeed because of insufficient inspection of the data being exchanged?

Yes, in a trivial sense it did. But Stuxnet is a poor and misleading example in the context of air-gapped hardware wallets, which exchange data via SD card or QR code.

The article claims:

> The security of an air-gapped system fully relies on the fact that the exchanged data is not malicious or maliciously altered during transfer. As famously demonstrated by the Stuxnet malware that sabotaged an Iranian uranium enrichment facility, not thoroughly inspecting the exchanged data can render security benefits moot, for nuclear factories or for cryptocurrency hardware wallets.

This is correct but misleading: the security of every system, air-gapped or not, relies fully on properly, correctly, completely, and always validating all data inputs. Failure to do this is what has plagued us with the long list of known attacks in which carefully crafted invalid data leads to buffer overruns, resource leaks, crashes, out-of-range arguments being accepted, and so on. Those attacks have affected operating systems; desktop, mobile, web, and server applications; computers in datacenters; phones; other devices in your home and office; and, yes, even hardware wallets.
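To make "validating all data inputs" concrete, here is a minimal sketch of the kind of structural check a wallet might run on an incoming payload before parsing it any further. It assumes the payload is a base64-encoded PSBT; the five magic bytes come from BIP 174, while the function name, the exception class, and the size limit are hypothetical and chosen only for illustration. It is not how any particular wallet does it.

```python
import base64
import binascii

PSBT_MAGIC = b"psbt\xff"      # magic bytes defined by BIP 174
MAX_PAYLOAD_BYTES = 100_000   # hypothetical limit, chosen for illustration


class InvalidPayload(ValueError):
    """Raised when an incoming payload fails validation."""


def validate_incoming_psbt(payload: str) -> bytes:
    """Return the decoded bytes only if the payload passes basic checks."""
    # Reject oversized input before doing any decoding work.
    if len(payload) > MAX_PAYLOAD_BYTES * 2:
        raise InvalidPayload("payload too large")
    try:
        # validate=True rejects characters outside the base64 alphabet.
        raw = base64.b64decode(payload, validate=True)
    except (binascii.Error, ValueError) as exc:
        raise InvalidPayload("not valid base64") from exc
    if len(raw) > MAX_PAYLOAD_BYTES:
        raise InvalidPayload("decoded payload too large")
    # Anything that is not a PSBT is refused, never interpreted.
    if not raw.startswith(PSBT_MAGIC):
        raise InvalidPayload("missing PSBT magic bytes")
    return raw
```

Nothing in this check depends on how the bytes arrived: the same function would sit behind a USB endpoint, an SD card reader, or a QR scanner. A real parser would go on to validate every field after the magic bytes as well.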

So what happened with Stuxnet? It is not an example of omitted or bad validation of data inputs crossing the communication channel, as described above. The airgap the Stuxnet malware crossed was supposed to surround the entire nuclear enrichment facility. Some employee there breached that airgap by taking an ordinary USB stick out of a computer outside the airgap, where it had been infected, and plugging it into a computer inside the airgap. From there it could proceed to infect computers in the typical ordinary fashion because, basically, computers are designed to run code off of USB sticks. Nothing in that - nothing at all - suggests that you can transfer arbitrary executable code (i.e., malware) optically (via QR codes) to a reader that is expecting to read a bitcoin transaction.

So Stuxnet didn’t involve “exchanged data” that was “maliciously altered during transfer.” It was malicious data, yes: a total garden-variety[1] virus spread by an infected USB thumb drive. It did need to cross an air gap.

Later the article follows up with this:

> As taught by the Stuxnet example above, no communication channel by itself prevents the sending or receiving of data that is different than the expected bitcoin transaction data.

But this isn’t what is taught by the Stuxnet example, which instead is a lesson on human fallibility even in the face of dire personal consequences[2] for failure to follow the rules (along with other such examples as the 2008 Chatsworth, CA mass-fatality train wreck and the 1994 Fairchild B-52 crash).

So why use Stuxnet as the example? Maybe because it is scary?

Is converting an existing desktop computer to a dedicated air-gapped device inherently flawed because it is “open to many attack vectors”?

Now the article goes on to explain the price of not validating data properly (in this case, partially signed bitcoin transactions, or PSBTs). That discussion is fine, except for the rather strong implication that this problem affects only air-gapped wallets: it affects all wallets. But then it makes another interesting claim:
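As an illustration of why this kind of validation is independent of the transport, here is a minimal sketch of one semantic check a signer could run on a transaction it has already parsed: refusing a fee that is negative or suspiciously large. The `TxSummary` type, the `check_fee` function, and the 10% threshold are all hypothetical, invented for this example; a real wallet checks far more than this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TxSummary:
    """Hypothetical, already-parsed view of a PSBT; amounts in satoshis."""
    input_amounts: tuple[int, ...]
    output_amounts: tuple[int, ...]


MAX_FEE_FRACTION = 0.10  # hypothetical policy: refuse fees above 10% of inputs


def check_fee(tx: TxSummary) -> int:
    """Return the fee in satoshis, or raise ValueError if it looks wrong."""
    if not tx.input_amounts or not tx.output_amounts:
        raise ValueError("transaction needs at least one input and one output")
    if any(a <= 0 for a in tx.input_amounts) or any(a < 0 for a in tx.output_amounts):
        raise ValueError("amounts out of range")
    total_in = sum(tx.input_amounts)
    fee = total_in - sum(tx.output_amounts)
    if fee < 0:
        raise ValueError("outputs exceed inputs")
    if fee > total_in * MAX_FEE_FRACTION:
        raise ValueError("fee is suspiciously high")
    return fee
```

The function takes no argument describing how the transaction reached the device, which is the point: a wallet connected by USB needs exactly the same check as one fed by SD card or QR code.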

> Converting an existing desktop computer into a dedicated air-gapped device, although sometimes recommended, is hard to secure and open to many attack vectors. [My emphasis.]

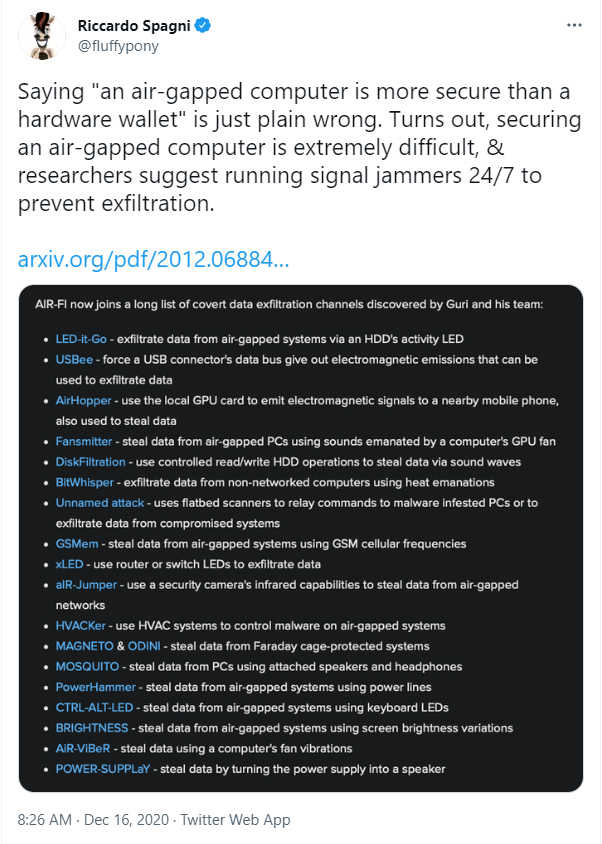

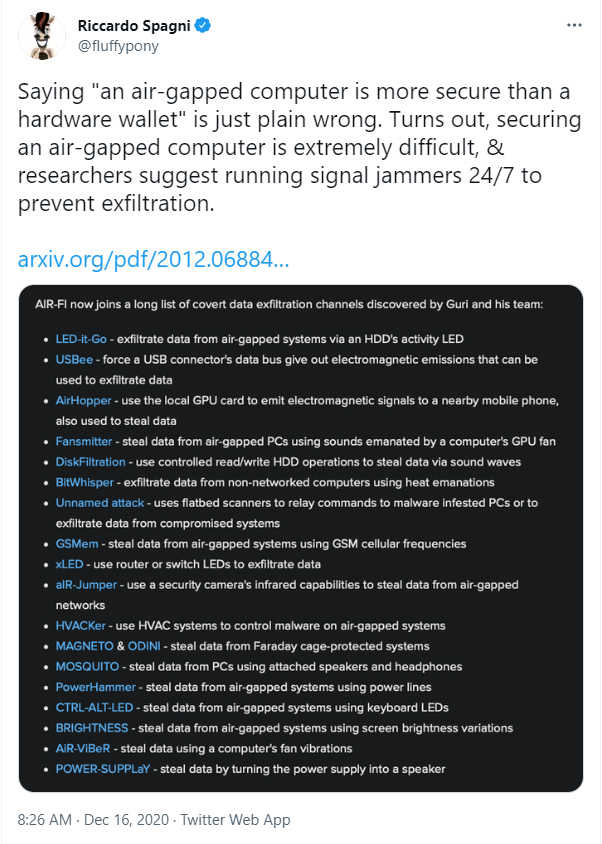

Is that so? Is an air-gapped desktop or laptop computer really “open to many attack vectors”? There’s a helpful link to a tweet, and that tweet indeed has a big scary list of attack vectors to an air-gapped computer:

(Tweet embedded as image because I’m lame and can’t figure out how to do it right - actual link to tweet is here - sorry!)

Well, look at that list and decide for yourself how many you’re vulnerable to at home. Also decide for yourself who has the resources to implement those attacks in your home (or even in your office).

My evaluation: Those attacks are silly in my home. Nobody is going to be hiding a pinhole camera in my home to look at the flashing LEDs on my router or keyboard or HDD. Nobody is going to be listening to the electromagnetic emissions from my USB connector or GPU and be able to separate them from the electromagnetic emissions from all the other stuff I’m running in here. Nobody is going to be getting data from my screen brightness variations or from vibrations from my laptop’s fan. These are all nice proofs of concept, suitable for wowing a conference and for keeping people’s awareness open to the extraordinary. But they are impractical[3] in nearly all cases unless you’re a nation state. And if a nation state is coming after me, I’ve got bigger problems than avoiding the possibility that they are exfiltrating my Bitcoin private keys via the heat my computer emanates. Moreover, they’ve got easier, more productive methods to surveil me with, and very big sticks to beat me with (including imprisonment) for the information they really want.[4]

(I’m going to continue reading this article, but we haven’t even reached the “three main reasons why airgap does not do much for security in practice” and I’m already thinking that these two exaggerated and misleading claims make this less of a reference on the pros/cons of air-gapping and somewhat more of an excuse for why they don’t support airgap and don’t intend to… I am not saying that was their intent - not at all: I know they’ve thought this through and based their product on solid ground, but they might be unaware that this particular article exhibits more of a bias than they intended to put into it…)

Next up: Strawman identified and destroyed: part 3

[1] Well, “garden variety” in the sense that it was similar to many other virus attacks spread through an infected USB drive. It was a bit special in that it took the resources of two nation states to develop and deliver it …

[2] I’m presuming the technicians at the Iranian nuclear enrichment plant were taught quite well and quite frequently the dangers and consequences for bringing a thumb drive into the facility and plugging it in.

[3] Also, most (maybe all) of these attacks do not apply to air-gapped computers as the communication channel almost always requires malware preestablished on the device in order to set up the communication channel via LEDs or HDD sound waves or whatever.

[4] For the record: I do not now, nor have I had in the past, nor will I in the future, any information at all that the government - any government - really wants from me (besides my ordinary 1040 every year). The FBI has no reason to look at me now nor will they ever have a reason. This just comes up as part of the discussion of a threat model; something that is not part of my threat model, but something which is part of a threat model that, judging from comments on various cryptocurrency discussion boards, is shared by a great many cryptocurrency hobbyists who think they have to protect themselves from attacks that could only be launched by a nation state coming after them individually. (It’s not really paranoid to worry about a nation state coming after cryptocurrency enthusiasts collectively, things like that have happened to various groups of people in various places at various times, including recently; I’m just objecting to the idea that the police arm of a nation state is coming after me particularly (or you particularly, for that matter) using the most advanced and unique and difficult spy technologies and resources available to them - while eschewing the easier stuff.)